Introduction

We have reached the point where a phone or computer can recognize the objects in an image, thanks to technologies like YOLO and SAM.

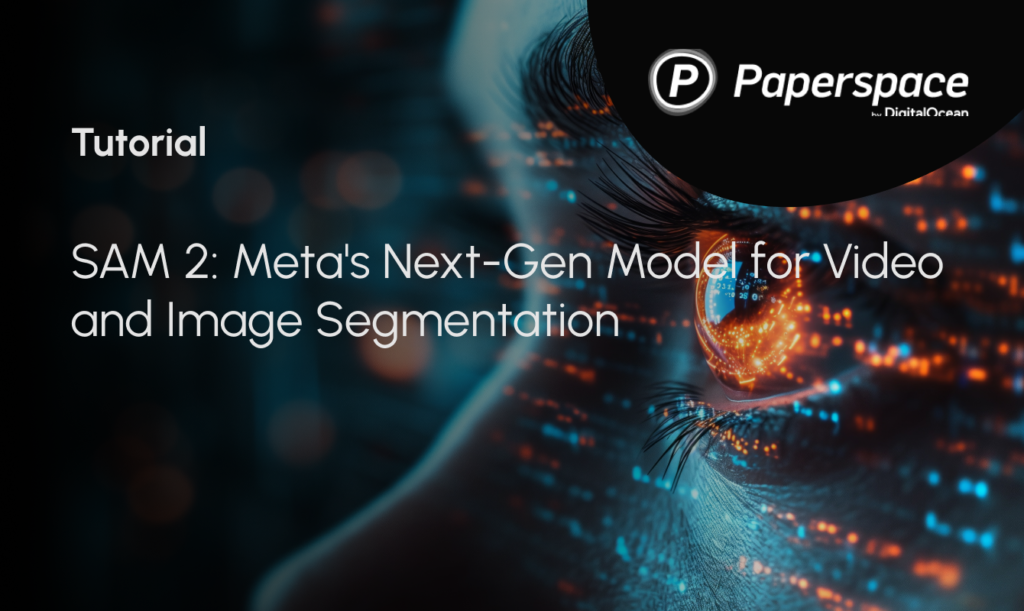

Meta’s Segment Anything Model (SAM) can identify objects in images and separate them without being trained on those specific images. It’s like a digital magician, able to understand each object in an image with just a wave of its virtual wand. After the successful release of Llama 3.1, Meta announced SAM 2 on July 29, 2024: a unified model for real-time object segmentation in images and videos that achieves state-of-the-art performance.

SAM 2 offers numerous real-world applications. For instance, its outputs can be integrated with generative video models to create innovative video effects and unlock new creative possibilities. Additionally, SAM 2 can enhance visual data annotation tools, speeding up the development of more advanced computer vision systems.

What is Image Segmentation in SAM?

Segment Anything (SAM) introduces an image segmentation task where a segmentation mask is generated from an input prompt, such as a bounding box or point indicating the object of interest. Trained on the SA-1B dataset, SAM supports zero-shot segmentation with flexible prompting, making it suitable for various applications. Recent advancements have improved SAM’s quality and efficiency. HQ-SAM enhances output quality using a High-Quality output token and training on fine-grained masks. Efforts to increase efficiency for broader real-world use include EfficientSAM, MobileSAM, and FastSAM. SAM’s success has led to its application in fields like medical imaging, remote sensing, motion segmentation, and camouflaged object detection.

Dataset Used

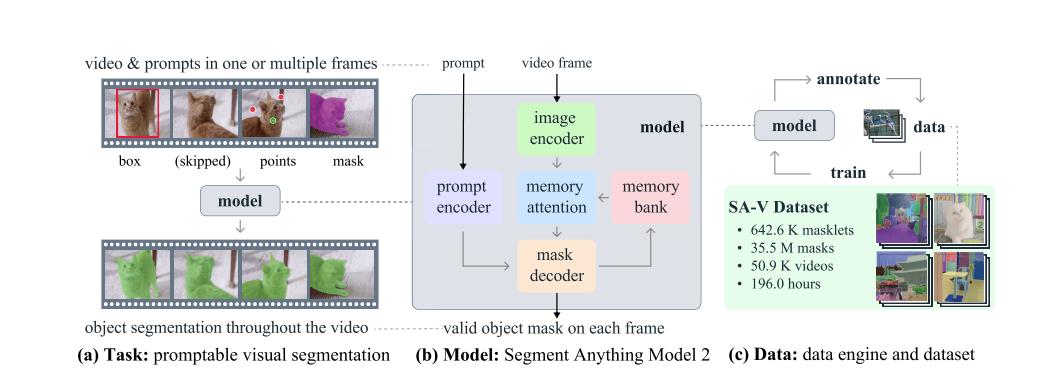

Many datasets have been developed to support the video object segmentation (VOS) task. Early datasets feature high-quality annotations but are too small for training deep learning models. YouTube-VOS, the first large-scale VOS dataset, covers 94 object categories across 4,000 videos. As algorithms improved and benchmark performance plateaued, researchers increased the difficulty of the VOS task by focusing on occlusions, long videos, extreme transformations, and both object and scene diversity. Current video segmentation datasets lack the breadth needed to “segment anything in videos,” as their annotations typically cover entire objects within specific classes like people, vehicles, and animals. In contrast, the recently introduced SA-V dataset focuses not only on whole objects but also extensively on object parts, and it contains over an order of magnitude more masks. The SA-V dataset comprises 50.9K videos with 642.6K masklets (spatio-temporal masks).

Model Architecture

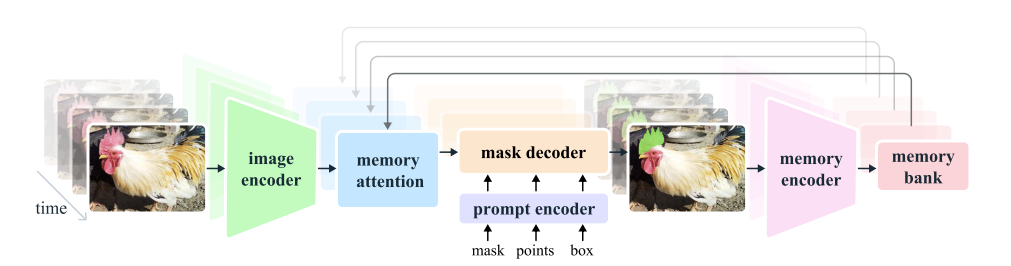

The model extends SAM to work with both videos and images. SAM 2 can use point, box, and mask prompts on individual frames to define the spatial extent of the object to be segmented throughout the video. When processing images, the model operates similarly to SAM. A lightweight, promptable mask decoder takes a frame’s embedding and any prompts to generate a segmentation mask. Prompts can be added iteratively to refine the masks.

Unlike SAM, the frame embedding used by the SAM 2 decoder isn’t taken directly from the image encoder. Instead, it’s conditioned on memories of past predictions and of prompted frames. Prompted frames can also lie in the “future” relative to the current frame. The memory encoder creates these memories based on the current prediction and stores them in a memory bank for later use. The memory attention operation takes the per-frame embedding from the image encoder and conditions it on the memory bank to produce the embedding that is passed to the mask decoder.

Here’s a simplified explanation of the components and processes shown in the figure above:

Image Encoder

- Purpose: The image encoder processes each video frame to create feature embeddings, which are essentially condensed representations of the visual information in each frame.

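- How It Works: It runs only once over the video for the entire interaction, so adding more prompts does not require re-encoding the frames. SAM 2 uses a Hiera encoder pre-trained with MAE. Because Hiera is hierarchical, it extracts features at different levels of detail, which helps with accurate segmentation.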

Memory Attention

- Purpose: Memory attention helps the model use information from previous frames and any new prompts to improve the current frame’s segmentation.

- How It Works: It uses a series of transformer blocks to process the current frame’s features, compare them with memories of past frames, and update the segmentation based on both. This helps handle complex scenarios where objects might move or change over time.
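The conditioning step can be illustrated with a single cross-attention operation, where tokens from the current frame attend to tokens stored in memory. This is a minimal NumPy sketch under simplifying assumptions, not SAM 2's implementation: it uses one attention head, no learned projection weights, and random vectors in place of real features. The function names and tensor sizes are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attend(frame_tokens, memory_tokens):
    """Condition current-frame tokens on memory tokens.

    Single-head scaled dot-product attention with a residual connection.
    A real model would apply learned query/key/value projections first.
    """
    dim = frame_tokens.shape[-1]
    scores = frame_tokens @ memory_tokens.T / np.sqrt(dim)
    weights = softmax(scores, axis=-1)      # each row sums to 1
    attended = weights @ memory_tokens
    return frame_tokens + attended, weights

rng = np.random.default_rng(0)
frame_tokens = rng.normal(size=(16, 8))     # 16 tokens for the current frame
memory_tokens = rng.normal(size=(48, 8))    # 48 tokens gathered from memory
conditioned, weights = cross_attend(frame_tokens, memory_tokens)
print(conditioned.shape)
```

The output keeps the shape of the current frame's tokens, so it can be handed to a decoder in place of the unconditioned embedding.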

Prompt Encoder and Mask Decoder

- Prompt Encoder: Similar to SAM’s, it takes input prompts (like clicks or boxes) to define what part of the frame to segment. It uses these prompts to refine the segmentation.

- Mask Decoder: It works with the prompt encoder to generate accurate masks. If a prompt is ambiguous, it predicts multiple candidate masks, each with a predicted IoU (overlap) score, and when no further prompt resolves the ambiguity it keeps the mask with the highest score.
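Choosing among several candidate masks comes down to taking the one with the highest confidence score. The sketch below shows that selection on toy data. The masks and scores are made up for illustration, and `pick_best_mask` is a hypothetical helper, not part of any SAM 2 API.

```python
import numpy as np

def pick_best_mask(masks, scores):
    """Return the index and mask with the highest score."""
    best = int(np.argmax(scores))
    return best, masks[best]

# Three toy candidate masks on a 4x4 frame: a part, a whole object, everything.
masks = np.zeros((3, 4, 4), dtype=bool)
masks[0, 1:3, 1:3] = True
masks[1, 0:3, 0:3] = True
masks[2, :, :] = True

# Made-up confidence scores, one per candidate.
scores = np.array([0.62, 0.91, 0.48])

best_index, best_mask = pick_best_mask(masks, scores)
print(best_index, int(best_mask.sum()))
```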

Memory Encoder and Memory Bank

- Memory Encoder: This component creates memories of past frames by summarizing and combining information from previous masks and the current frame. This helps the model remember and use information from earlier in the video.

- Memory Bank: It stores memories of past frames and prompts. This includes a queue of recent frames and prompts and high-level object information.
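One way to picture the memory bank is as two bounded first-in, first-out queues: one for recent frames and one for prompted frames. The sketch below assumes that layout. The class name, queue sizes, and string placeholders for memories are hypothetical, and the high-level object information is left out.

```python
from collections import deque

class MemoryBank:
    """Toy memory bank with separate queues for recent and prompted frames."""

    def __init__(self, max_recent=6, max_prompted=2):
        # deque(maxlen=...) silently drops the oldest entry when full.
        self.recent = deque(maxlen=max_recent)
        self.prompted = deque(maxlen=max_prompted)

    def add(self, frame_idx, memory, was_prompted=False):
        target = self.prompted if was_prompted else self.recent
        target.append((frame_idx, memory))

    def frame_indices(self):
        # Prompted frames first, then recent frames, oldest to newest.
        return [i for i, _ in self.prompted] + [i for i, _ in self.recent]

bank = MemoryBank(max_recent=3, max_prompted=2)
bank.add(0, "memory-0", was_prompted=True)   # the user clicked on frame 0
for idx in range(1, 6):
    bank.add(idx, f"memory-{idx}")           # frames 1..5 were only predicted

print(bank.frame_indices())
```

With a recent-frame capacity of three, frames 1 and 2 are evicted while the prompted frame 0 stays available, which is why a prompt given early can still guide frames much later in the video.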

Together, these components help SAM 2 keep track of object changes and movements over time. The model is trained to handle interactive prompting and segmentation tasks using both images and videos. During training, the model learns to predict segmentation masks by interacting with sequences of frames and receiving prompts like ground-truth masks, clicks, or bounding boxes to guide its predictions. This training process helps the model become adept at responding to various types of input and improving its segmentation accuracy.

Overall, SAM 2 is designed to efficiently handle long videos, remember information from past frames, and accurately segment objects based on interactive prompts.

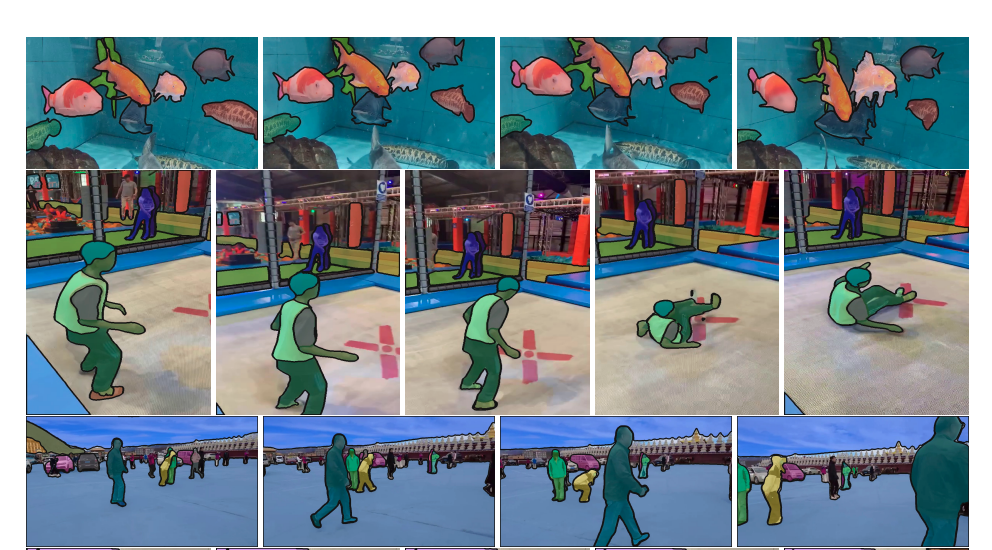

SAM 2 significantly outperforms previous methods in interactive video segmentation, achieving superior results across 17 zero-shot video datasets and requiring about three times fewer human interactions. It excels in established video object segmentation benchmarks like DAVIS, MOSE, LVOS, and YouTube-VOS. With real-time inference at approximately 44 frames per second, SAM 2 is 8.4 times faster than manual per-frame annotation with SAM.
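To put the throughput figure in perspective, the short calculation below converts 44 frames per second into a per-frame time budget and applies it to a hypothetical 10-second clip recorded at 30 FPS. The clip length and frame rate are assumptions chosen only for illustration.

```python
fps = 44.0                      # approximate throughput quoted above
ms_per_frame = 1000.0 / fps     # time budget per frame, in milliseconds

clip_frames = 30 * 10           # hypothetical clip: 10 seconds at 30 FPS
clip_seconds = clip_frames / fps

print(f"{ms_per_frame:.1f} ms per frame")
print(f"{clip_seconds:.1f} s to process {clip_frames} frames")
```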

To install SAM 2, start by cloning the repository, moving to the folder, and installing the necessary requirements. Additional steps include downloading the checkpoints and setting up the SAM 2 predictor to run example notebooks.

SAM 2 can be used for image prediction and video prediction. The model supports segmenting objects in static images and videos, utilizing prompts like clicks, bounding boxes, or masks to define object boundaries. SAM 2 uses a memory mechanism for video segmentation, allowing accurate predictions across frames and iterative refinement by adding prompts to subsequent frames. The model processes frames in a streaming architecture, incorporating past frame data into current predictions and addressing ambiguity by generating multiple masks when prompts are unclear.
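For image prediction, a single click can be turned into a mask roughly as sketched below. This assumes the `sam2` package from Meta's repository is installed, a checkpoint has been downloaded, and a CUDA GPU is available. The config and checkpoint names follow the repository's README at release and may differ in later versions. The wrapper function `segment_with_point` is a hypothetical helper written for this example.

```python
import numpy as np

def segment_with_point(image, point_xy,
                       config="sam2_hiera_l.yaml",
                       checkpoint="./checkpoints/sam2_hiera_large.pt"):
    """Segment the object under one foreground click.

    `image` is an RGB array of shape (H, W, 3); `point_xy` is (x, y) in pixels.
    Config and checkpoint names are assumptions based on the release README.
    """
    # Imported here so this file can be loaded without SAM 2 installed.
    import torch
    from sam2.build_sam import build_sam2
    from sam2.sam2_image_predictor import SAM2ImagePredictor

    predictor = SAM2ImagePredictor(build_sam2(config, checkpoint))
    with torch.inference_mode(), torch.autocast("cuda", dtype=torch.bfloat16):
        predictor.set_image(image)
        masks, scores, _ = predictor.predict(
            point_coords=np.array([point_xy], dtype=np.float32),
            point_labels=np.array([1], dtype=np.int32),  # 1 marks a foreground click
            multimask_output=True,                       # return several candidates
        )
    best = int(np.argmax(scores))
    return masks[best], float(scores[best])
```

Calling `segment_with_point(image, (x, y))` returns the highest-scoring candidate mask and its score. Video prediction follows the same prompting idea but uses the repository's separate video predictor, which propagates masks across frames.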

While SAM 2 performs well in segmenting objects, it may struggle with challenges like drastic changes in camera viewpoints, long occlusions, crowded scenes, object confusion, and fast-moving objects. Future improvements could enhance SAM 2’s performance, especially in challenging scenarios, and automate the data annotation process to boost efficiency.